Artificial Intelligence (AI) is transforming industries across the world. From healthcare and education to finance and transportation, AI systems are helping organizations improve efficiency, automate processes, and make smarter decisions. However, as AI continues to advance, it also raises serious ethical concerns that businesses, governments, and society must address.

Understanding the ethical issues in artificial intelligence technology is essential because these systems influence human lives, decisions, privacy, and opportunities. While AI offers many benefits, responsible development and usage are necessary to prevent misuse and unintended consequences.

This article explores the major ethical challenges associated with AI technology and why ethical guidelines are important for the future of innovation.

What Is Artificial Intelligence?

Artificial Intelligence refers to computer systems that can perform tasks that normally require human intelligence. These tasks include learning from data, recognizing patterns, understanding language, and making decisions.

AI technologies include:

- Machine learning

- Natural language processing

- Computer vision

- Robotics

- Predictive analytics

These systems are increasingly integrated into everyday tools such as search engines, recommendation systems, smart assistants, and automated customer service platforms.

Why Ethics Matter in Artificial Intelligence

Ethics in AI focuses on ensuring that technology is used responsibly, fairly, and safely. Because AI systems often analyze large amounts of data and influence decisions, ethical problems can arise if these systems are not carefully designed.

Some key reasons ethics are important include:

- Protecting user privacy

- Preventing discrimination

- Ensuring transparency

- Avoiding misuse of technology

- Maintaining human control over machines

Without ethical considerations, AI could create social and economic challenges instead of improving human life.

Major Ethical Issues in Artificial Intelligence

1. Bias and Discrimination

One of the most significant ethical concerns in AI is algorithmic bias. AI systems learn from data, and if the data used to train these systems contains biases, the AI can produce unfair or discriminatory outcomes.

For example, biased AI systems may:

- Favor certain groups over others

- Make unfair hiring decisions

- Provide unequal access to services

- Reinforce existing social inequalities

Training on representative, diverse datasets and regularly auditing outcomes across groups are essential to reducing this problem.
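One simple way to check for the kind of unequal outcomes described above is to compare how often each group receives a positive decision. The sketch below is only an illustration: the group labels, the sample decisions, and the function names are hypothetical, and a real audit would use more than one fairness metric.

```python
from collections import defaultdict

def selection_rates(records):
    """Return the share of positive decisions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        positives[group] += int(selected)
    return {group: positives[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group is favored
    return min(rates.values()) / highest

# Hypothetical screening decisions: (group label, was the candidate shortlisted?)
decisions = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))
```

A ratio well below 1.0 suggests one group is selected far less often than another. A commonly cited rule of thumb treats ratios under 0.8 as a signal to investigate further, not as proof of discrimination.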

2. Privacy and Data Protection

AI systems rely heavily on data to function effectively. However, collecting and analyzing personal data raises privacy concerns.

Potential privacy risks include:

- Unauthorized data collection

- Misuse of personal information

- Data breaches

- Lack of user consent

Organizations must follow strong data protection policies and ensure users understand how their data is being used.
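In practice, a data protection policy often starts with two habits: keep only the fields a system actually needs, and replace direct identifiers with pseudonyms. The following is a minimal sketch using Python's standard library. The record fields, the key, and the function names are assumptions made for illustration, and a real deployment would load the key from a secrets manager.

```python
import hashlib
import hmac

def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256)."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()

def minimize(record: dict, allowed_fields: set, secret_key: bytes) -> dict:
    """Keep only the allowed fields and swap the user ID for a pseudonym."""
    cleaned = {key: value for key, value in record.items() if key in allowed_fields}
    cleaned["user_ref"] = pseudonymize(record["user_id"], secret_key)
    return cleaned

# Hypothetical key and record, for illustration only.
SECRET_KEY = b"replace-with-a-managed-secret"
raw = {
    "user_id": "u-1001",
    "email": "ana@example.com",
    "age_band": "30-39",
    "country": "PT",
}

cleaned = minimize(raw, {"age_band", "country"}, SECRET_KEY)
print(sorted(cleaned))
```

Pseudonymization lowers the risk of exposing identities, but it does not make data anonymous: anyone holding the key, or enough of the remaining fields, may still be able to re-identify a person.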

3. Lack of Transparency

Many AI systems operate as “black boxes,” meaning it can be difficult to understand how they make decisions. This lack of transparency creates ethical concerns, especially in areas like healthcare, banking, and law enforcement.

If people cannot understand how decisions are made, it becomes harder to:

- Identify errors

- Ensure fairness

- Build trust in technology

- Hold systems accountable

Researchers and developers are working on explainable AI techniques that show which inputs drove a given decision, making the decision-making process clearer.
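One widely used idea for looking inside a black box is permutation importance: shuffle a single input and measure how much the model's accuracy drops. The sketch below assumes a toy stand-in model and made-up data. The feature names and the `opaque_model` function are hypothetical, and this is one explanation technique among many.

```python
import random

def accuracy(model, rows, labels):
    """Fraction of rows where the model's output matches the label."""
    correct = sum(1 for row, label in zip(rows, labels) if model(row) == label)
    return correct / len(rows)

def permutation_importance(model, rows, labels, feature_index, seed=0):
    """Accuracy lost when one feature's values are shuffled across rows."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    shuffled_column = [row[feature_index] for row in rows]
    rng.shuffle(shuffled_column)
    permuted_rows = [
        row[:feature_index] + (value,) + row[feature_index + 1:]
        for row, value in zip(rows, shuffled_column)
    ]
    return baseline - accuracy(model, permuted_rows, labels)

# Hypothetical stand-in for an opaque model: approves when income exceeds 50.
def opaque_model(row):
    income, shoe_size = row
    return income > 50

# Made-up rows of (income, shoe_size); labels come from the model itself.
rows = [(30, 40), (80, 42), (55, 38), (20, 44), (90, 41), (45, 39)]
labels = [opaque_model(row) for row in rows]

importances = {
    "income": permutation_importance(opaque_model, rows, labels, 0),
    "shoe_size": permutation_importance(opaque_model, rows, labels, 1),
}
print(importances)
```

A feature the model ignores, such as `shoe_size` here, scores zero because shuffling it changes nothing. A feature the model relies on tends to score higher, which gives reviewers a starting point for questioning a decision.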

4. Job Displacement and Economic Impact

AI-driven automation can replace certain jobs, especially those built around repetitive and manual tasks. While AI also creates new opportunities, the transition may lead to unemployment and economic inequality if it is not managed properly.

Some industries affected include:

- Manufacturing

- Customer service

- Transportation

- Data processing

Governments and organizations must focus on reskilling workers and preparing them for new technology-driven roles.

Ethical AI Development Principles

To address these concerns, many organizations follow ethical AI principles when designing and implementing technology.

Common principles include:

| Ethical Principle | Description |
|---|---|
| Fairness | AI systems should treat all individuals equally and avoid bias |
| Accountability | Developers and organizations must take responsibility for AI decisions |
| Transparency | AI processes should be understandable and explainable |
| Privacy Protection | Personal data must be handled securely and responsibly |
| Safety | AI systems should not harm individuals or society |

These principles help guide responsible innovation in artificial intelligence.

5. Security Risks and Misuse

AI technology can also be used for harmful purposes if it falls into the wrong hands. Cybercriminals may use AI to create sophisticated attacks, manipulate information, or exploit systems.

Possible misuse includes:

- Deepfake technology

- Automated hacking tools

- Misinformation campaigns

- Surveillance abuse

Developing strong cybersecurity strategies is essential to reduce these risks.

6. Autonomous Decision-Making

Some AI systems are designed to operate with minimal human intervention. While this increases efficiency, it also raises concerns about control and accountability.

Important ethical questions include:

- Who is responsible if AI makes a mistake?

- Should machines make life-impacting decisions?

- How much autonomy should AI systems have?

Maintaining human oversight is important in sensitive areas such as healthcare and law enforcement.
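Human oversight can be built into a system as a simple routing rule: the model decides only when it is confident and the stakes are low, and everything else goes to a person. The sketch below is illustrative only. The `Decision` class, the 0.9 threshold, and the `high_stakes` flag are assumptions, and suitable values depend on the domain.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    outcome: str
    decided_by: str

def route_decision(prediction: str, confidence: float,
                   high_stakes: bool, threshold: float = 0.9) -> Decision:
    """Send high-stakes or low-confidence cases to a human reviewer."""
    if high_stakes or confidence < threshold:
        return Decision(outcome="pending_review", decided_by="human_reviewer")
    return Decision(outcome=prediction, decided_by="model")

# Hypothetical cases: a routine request and a life-impacting one.
print(route_decision("approve", 0.97, high_stakes=False))
print(route_decision("deny", 0.99, high_stakes=True))
```

Recording who made each decision, as the `decided_by` field does here, also helps with the accountability question of who is responsible when something goes wrong.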

The Role of Governments and Regulations

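Governments around the world are developing laws and regulations to ensure ethical AI usage. The European Union's AI Act, for example, sorts AI systems into risk tiers and places stricter obligations on higher-risk uses. These regulations aim to protect citizens while encouraging innovation.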

Regulatory efforts often focus on:

- Data protection laws

- AI transparency standards

- Ethical development guidelines

- Risk assessment frameworks

Clear regulations help organizations use AI responsibly while maintaining public trust.

AI Ethics in Business and Technology Companies

Technology companies play a major role in shaping how AI is used in society. Businesses must consider ethical practices when developing AI-powered products and services.

This includes:

- Conducting ethical risk assessments

- Monitoring AI performance

- Preventing algorithmic bias

- Ensuring data security

- Being transparent with users

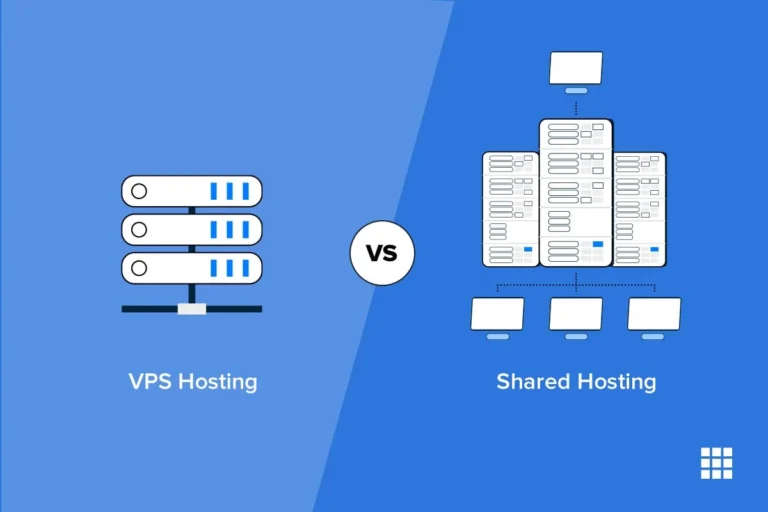

Future Challenges in AI Ethics

As artificial intelligence continues to evolve, new ethical challenges will emerge. Some of the future concerns include:

- AI in military systems

- Advanced surveillance technologies

- Human-like AI decision-making

- Control over superintelligent systems

- AI impact on democracy and society

Researchers and policymakers must work together to address these issues before they become major problems.

FAQs About Ethical Issues in Artificial Intelligence

1. What are ethical issues in artificial intelligence?

Ethical issues in AI are concerns about fairness, privacy, accountability, transparency, and the impact of AI systems on society.

2. Why is AI bias a problem?

AI bias can lead to unfair decisions that discriminate against certain individuals or groups, which can create social inequality.

3. Can artificial intelligence threaten jobs?

AI can automate certain tasks, which may eliminate some jobs. However, it also creates new roles in technology, data science, and AI management.

4. How can companies ensure ethical AI use?

Companies can follow ethical guidelines, audit their training data for bias, monitor AI systems regularly, and maintain transparency with users.

5. Will governments regulate artificial intelligence?

Yes, many governments are already developing policies and laws to ensure AI technologies are used responsibly and safely.

Conclusion

Artificial intelligence is one of the most powerful technologies of the modern era. While it offers significant benefits, it also introduces complex ethical challenges that must be addressed carefully. Issues such as bias, privacy, transparency, and security require attention from developers, businesses, and policymakers.